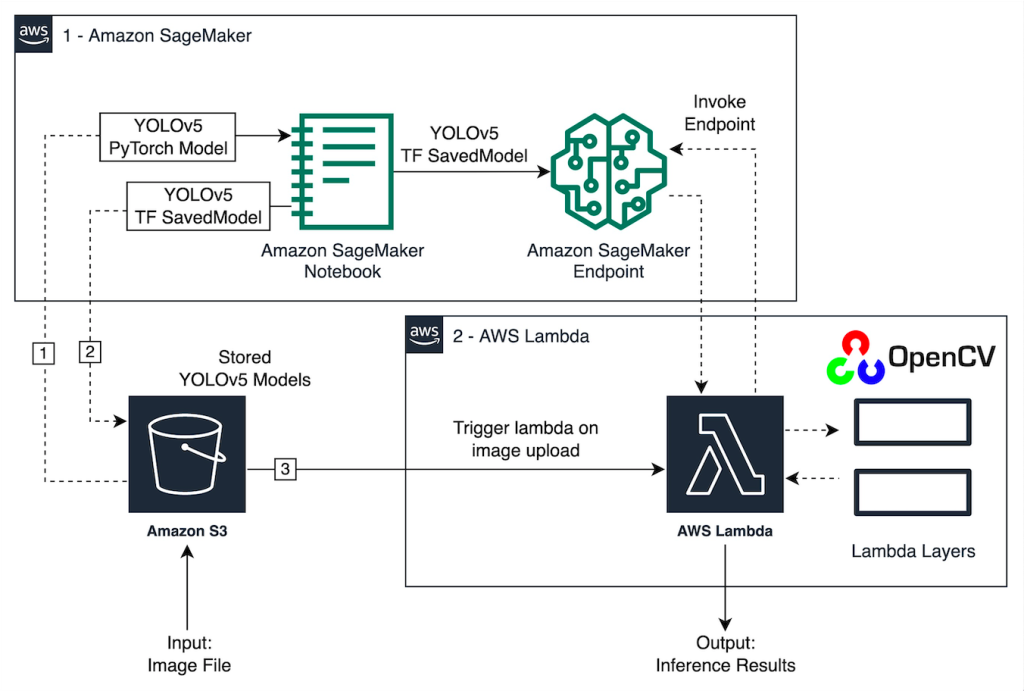

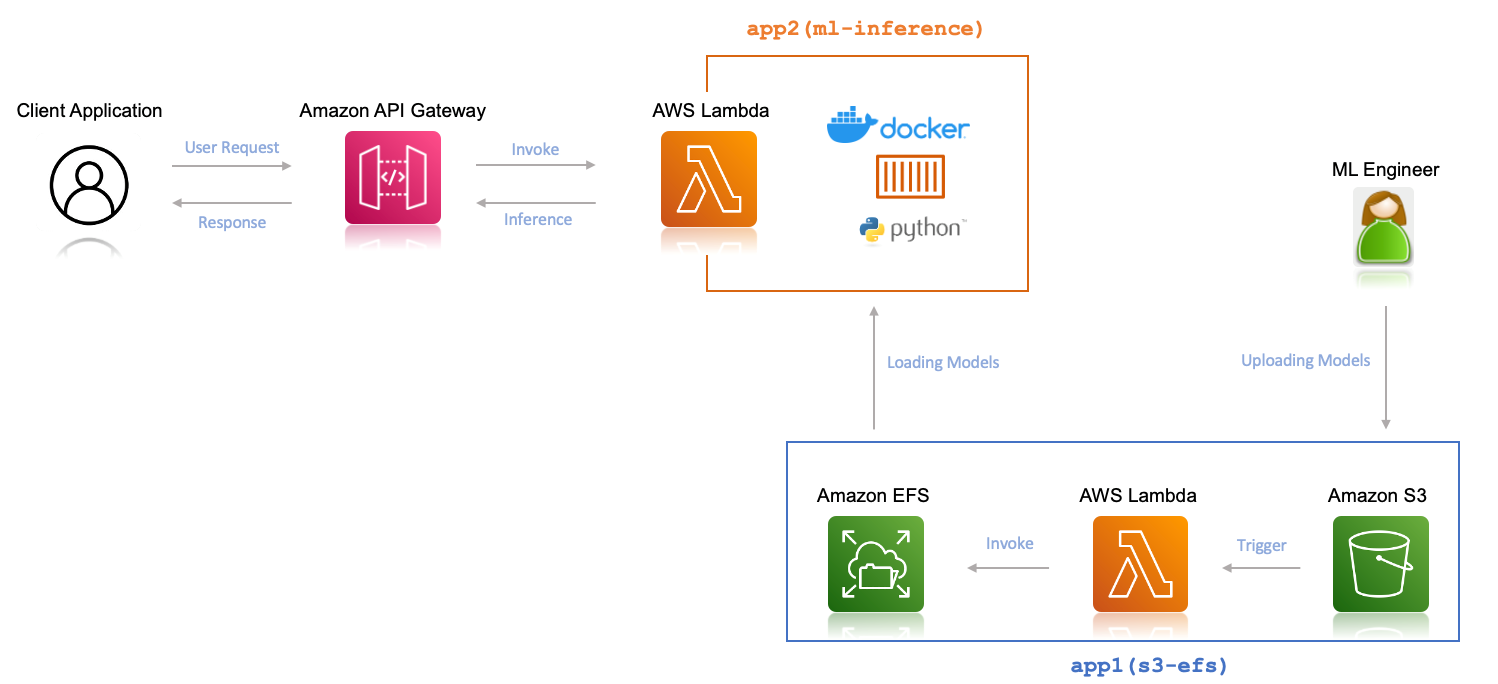

Deploy multiple machine learning models for inference on AWS Lambda and Amazon EFS | AWS Machine Learning Blog

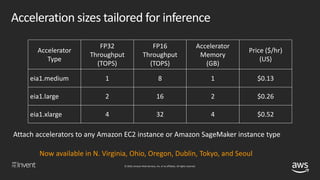

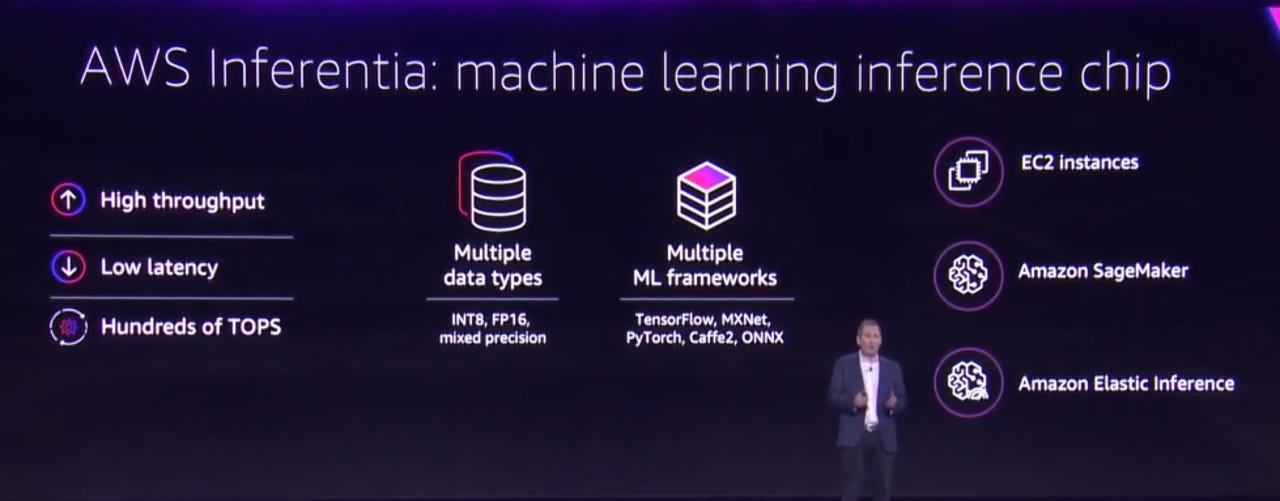

[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018 | PPT

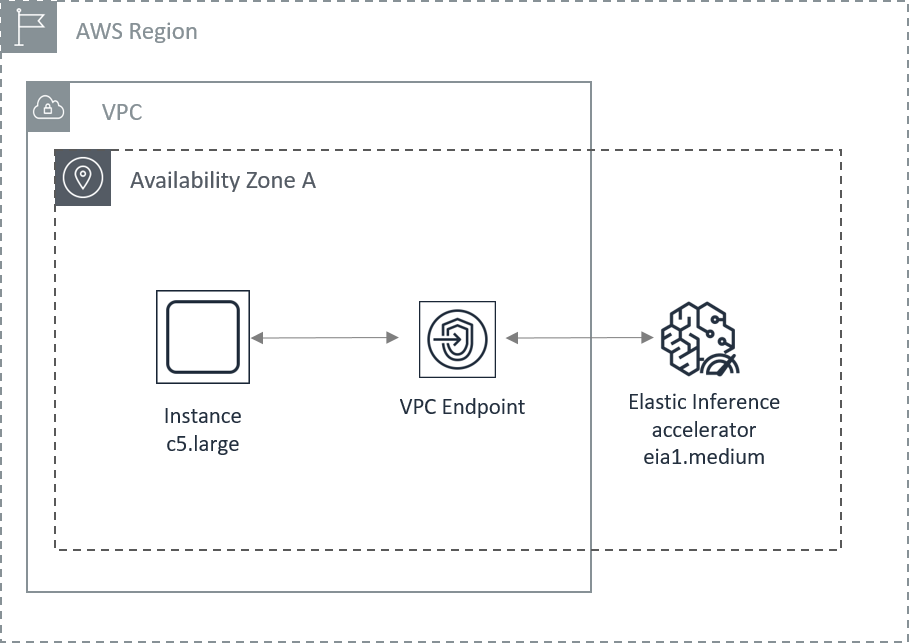

Using Fewer Resources to Run Deep Learning Inference on Intel FPGA Edge Devices | AWS Partner Network (APN) Blog

Amazon Web Services on X: "Introducing Amazon Elastic Inference: Reduce deep learning costs by up to 75% with low cost GPU-powered acceleration! #reInvent https://t.co/AY630jDINb https://t.co/cf2gBu6P9R" / X

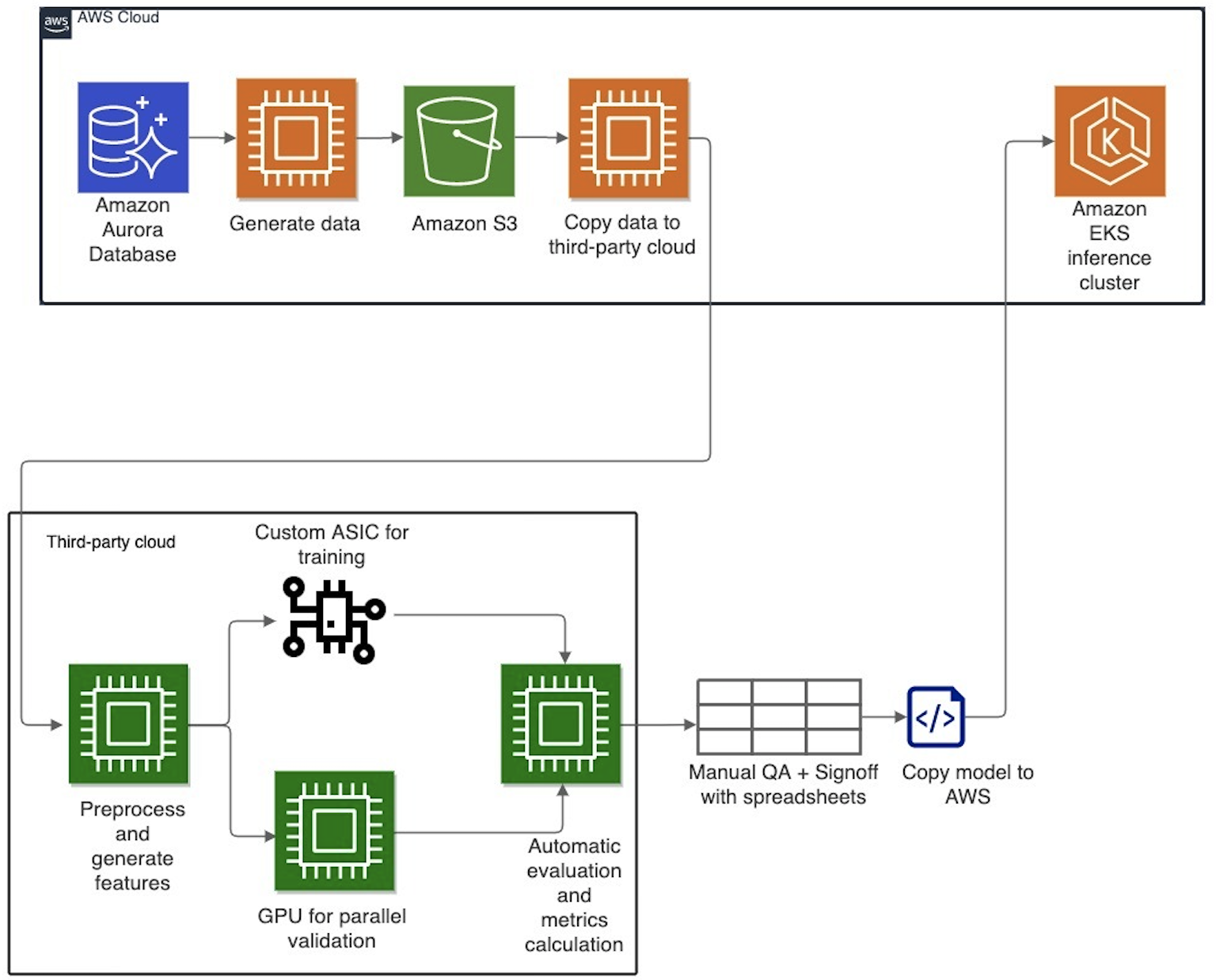

Evolution of Cresta's machine learning architecture: Migration to AWS and PyTorch | Data Integration

Maximize TensorFlow performance on Amazon SageMaker endpoints for real-time inference | AWS Machine Learning Blog

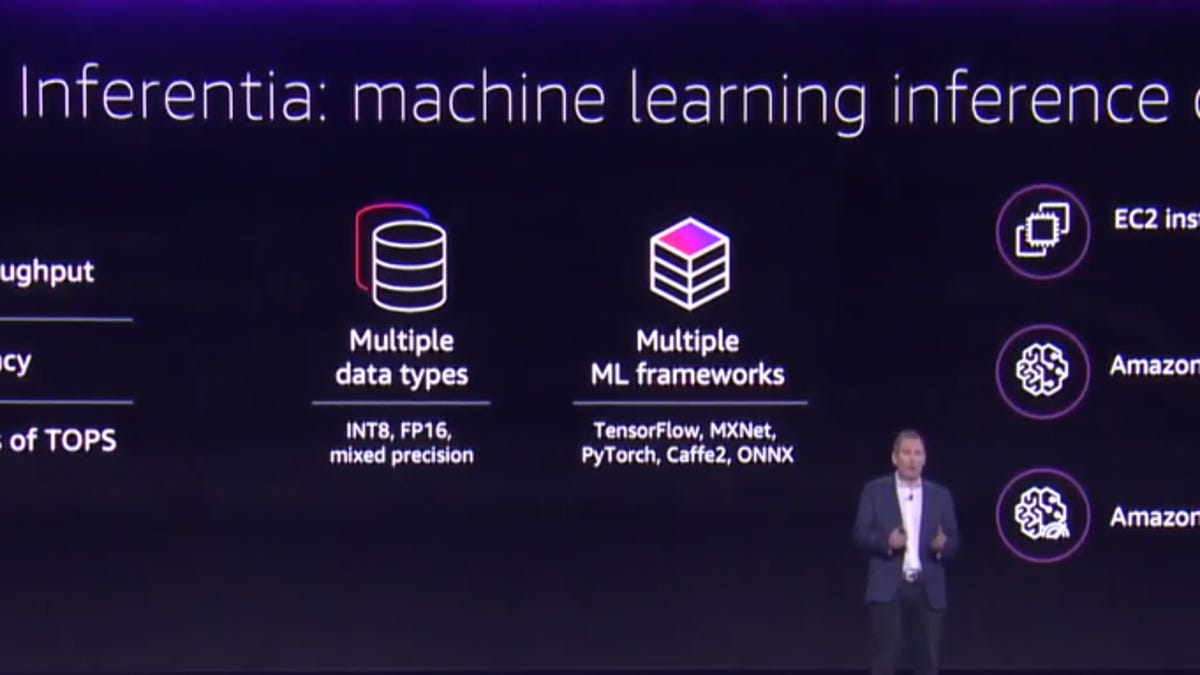

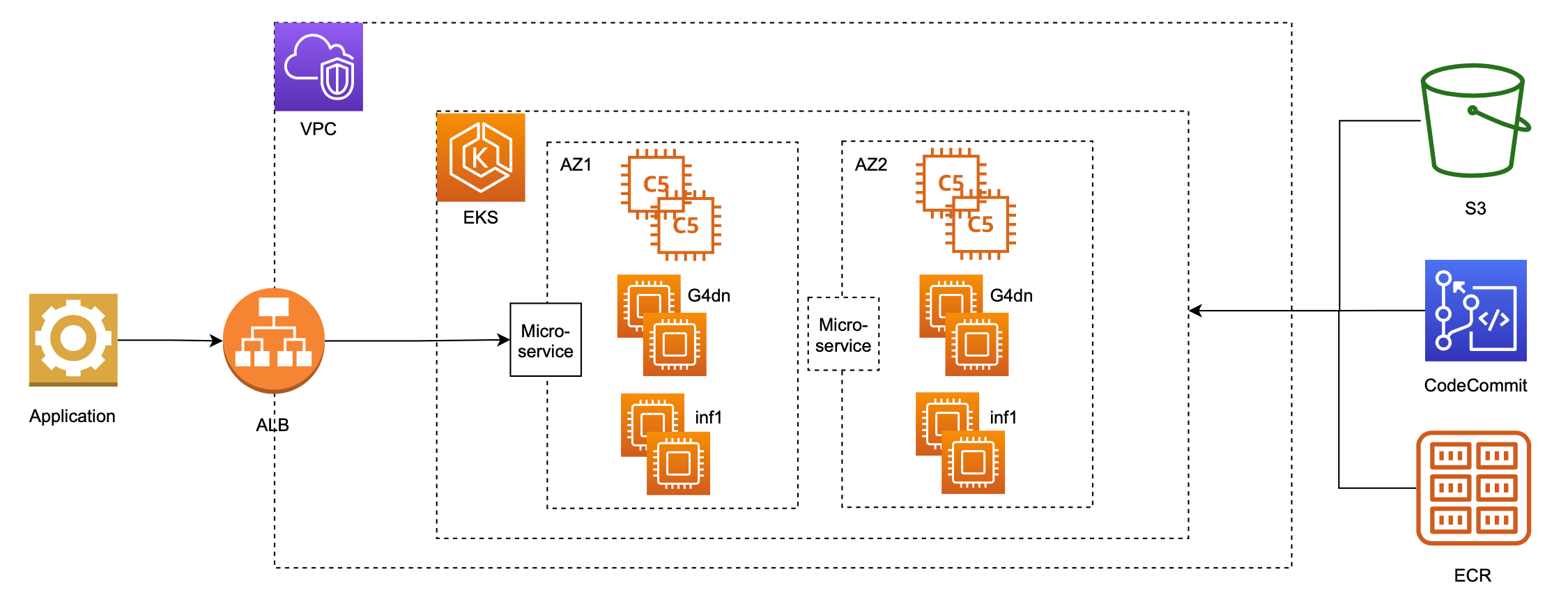

Serve 3,000 deep learning models on Amazon EKS with AWS Inferentia for under $50 an hour | AWS Machine Learning Blog